Two servicemen sit in an underground missile launch facility. Before them is a matrix of buttons and bulbs glowing red, white and green. Old-school screens with blocky, all-capped text beam beside them. Their job is to be ready, at any time, to launch a nuclear strike. Suddenly, an alarm sounds. The time has come for them to shoot their deadly weapon.

With the correct codes input, the doors to the missile silo open, pointing a bomb at the sky. Sweat shines on their faces. For the missile to fly, both must turn their keys. But one of them balks. He picks up the phone to call their superiors.

That’s not the procedure, says his partner. “Screw the procedure,” the dissenter says. “I want somebody on the goddamn phone before I kill 20 million people.”

Soon, the scene — which opens the 1983 techno-thriller “WarGames” — transitions to another set deep inside Cheyenne Mountain, a military outpost buried beneath thousands of feet of Colorado granite. It exists in real life and is dramatized in the movie.

In “WarGames,” the main room inside Cheyenne Mountain hosts a wall of screens that show the red, green and blue outlines of continents and countries, and what’s happening in the skies above them. There is not, despite what the servicemen have been led to believe, a nuclear attack incoming: The alerts were part of a test sent out to missile commanders to see whether they would carry out orders. All in all, 22% failed to launch.

“Those men in the silos know what it means to turn the keys,” says an official inside Cheyenne Mountain. “And some of them are just not up to it.” But he has an idea for how to combat that “human response,” the impulse not to kill millions of people: “I think we ought to take the men out of the loop,” he says.

From there, an artificially intelligent computer system enters the plotline and goes on to cause nearly two hours of potentially world-ending problems.

Discourse about the plot of “WarGames” usually focuses on the scary idea that a computer nearly launches World War III by firing off nuclear weapons on its own. But the film illustrates another problem that has become more pressing in the 40 years since it premiered: The computer displays fake data about what’s going on in the world. The human commanders believe it to be authentic and respond accordingly.

In the real world, countries — or rogue actors — could use fake data, inserted into genuine data streams, to confuse enemies and achieve their aims. How to deal with that possibility, along with other consequences of incorporating AI into the nuclear weapons sphere, could make the coming years on Earth more complicated.

The word “deepfake” didn’t exist when “WarGames” came out, but as real-life AI grows more powerful, it may become part of the chain of analysis and decision-making in the nuclear realm of tomorrow. The idea of synthesized, deceptive data is one AI issue that today’s atomic complex has to worry about.

You may have encountered the fruits of this technology in the form of Tom Cruise playing golf on TikTok, LinkedIn profiles for people who have never inhabited this world or, more seriously, a video of Ukrainian President Volodymyr Zelenskyy declaring the war in his country to be over. These are deepfakes: pictures or videos of things that never happened, but which can look astonishingly real. The problem becomes even more vexing when AI is used to create images that attempt to depict things that are indeed happening. Adobe recently caused a stir by selling AI-generated stock photos of violence in Gaza and Israel. The proliferation of this kind of material (alongside plenty of less convincing stuff) leads to an ever-present worry that any image presented as fact might actually have been fabricated or altered.

It may not matter much whether Tom Cruise was really out on the green, but the ability to see or prove what’s happening in wartime — whether an airstrike took place at a particular location or whether troops or supplies are really amassing at a given spot — can actually affect the outcomes on the ground.

Similar kinds of deepfake-creating technologies could be used to whip up realistic-looking data — audio, video or images — of the sort that military and intelligence sensors collect and that artificially intelligent systems are already starting to analyze. It’s a concern for Sharon Weiner, a professor of international relations at American University. “You can have someone trying to hack your system not to make it stop working, but to insert unreliable data,” she explained.

James Johnson, author of the book “AI and the Bomb,” writes that when autonomous systems are used to process and interpret imagery for military purposes, “synthetic and realistic-looking data” can make it difficult to determine, for instance, when an attack might be taking place. People could use AI to gin up data designed to deceive systems like Project Maven, a U.S. Department of Defense program that aims to autonomously process images and video and draw meaning from them about what’s happening in the world.

AI’s role in the nuclear world isn’t yet clear. In the U.S., the White House recently issued an executive order about trustworthy AI, mandating in part that government agencies address the nuclear risks that AI systems bring up. But problem scenarios like some of those conjured by “WarGames” aren’t out of the realm of possibility.

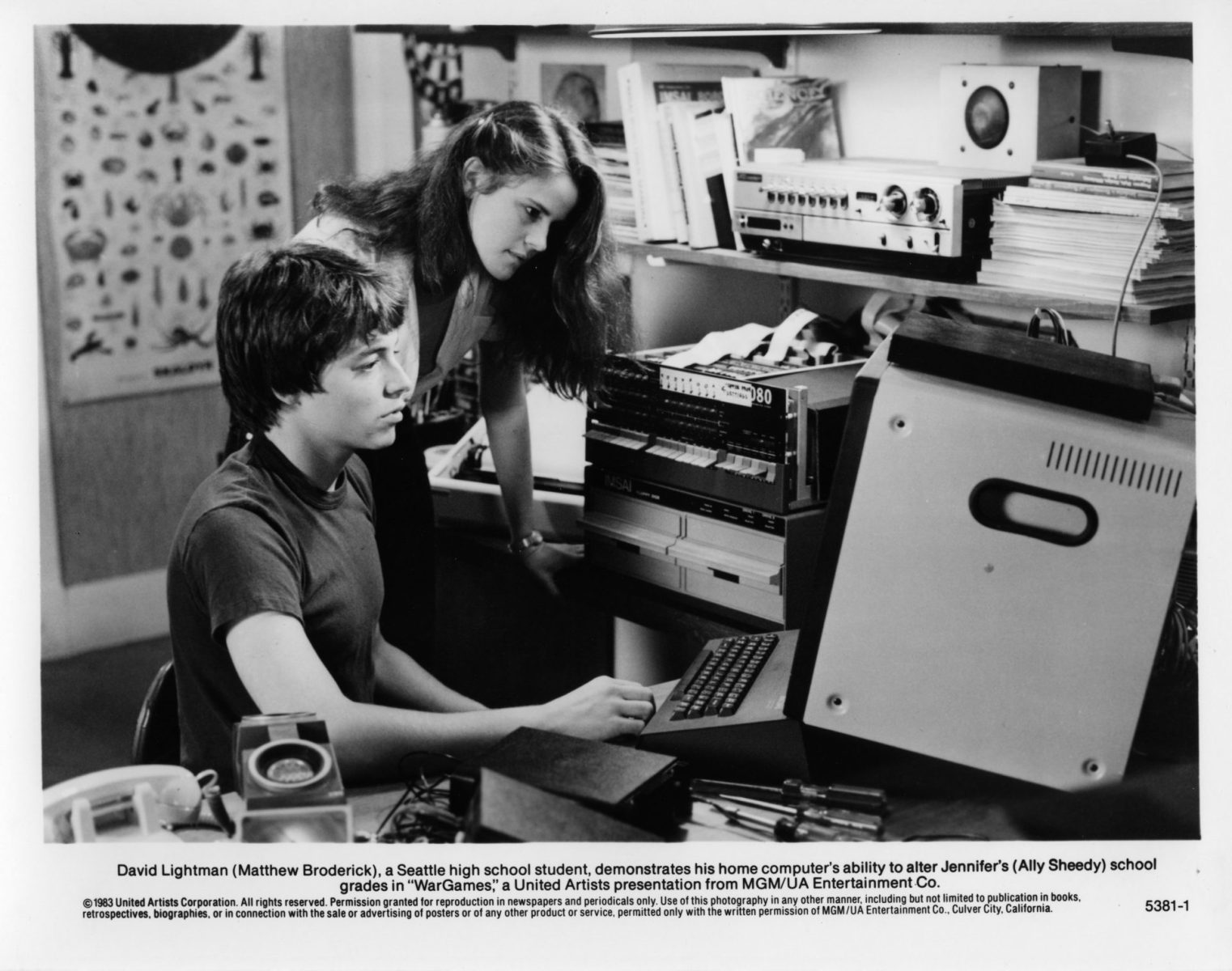

In the film, a teenage hacker taps into the military’s system and starts up a game he finds called “Global Thermonuclear War.” The computer displays the game data on the screens inside Cheyenne Mountain, as if it were coming from the ground. In the Rocky Mountain war room, a siren soon blares: It looks like Soviet missiles are incoming. Luckily, an official runs into the main room in a panic. “We’re not being attacked,” he yells. “It’s a simulation!”

In the real world, someone might instead try to cloak an attack with deceptive images that portray peace and quiet.

Researchers have already shown that the general idea behind this is possible: Scientists published a paper in 2021 on “deepfake geography,” or simulated satellite images. In that milieu, officials have worried about images that might show infrastructure in the wrong location or terrain that’s not true to life, messing with military plans. Los Alamos National Laboratory scientists, for instance, made satellite images that included vegetation that wasn’t real and showed evidence of drought where the water levels were fine, all for the purposes of research. You could theoretically do the same for something like troop or missile-launcher movement.

AI that creates fake data is not the only problem: AI could also be on the receiving end, tasked with analysis. That kind of automated interpretation is already ongoing in the intelligence world, although it’s unclear specifically how it will be incorporated into the nuclear sphere. For instance, AI on mobile platforms like drones could help process data in real time and “alert commanders of potentially suspicious or threatening situations such as military drills and suspicious troop or mobile missile launcher movements,” writes Johnson. That processing power could also help detect manipulation because of the ability to compare different datasets.

But creating those sorts of capabilities can help bad actors do their fooling. “They can take the same techniques these AI researchers created, invert them to optimize deception,” said Edward Geist, an analyst at the RAND Corporation. For Geist, deception is a “trivial statistical prediction task.” But recognizing and countering that deception is where the going gets tough. It involves a “very difficult problem of reasoning under uncertainty,” he told me. Amid the generally high-stakes feel of global dynamics, and especially in conflict, countries can never be exactly sure what’s going on, who’s doing what, and what the consequences of any action may be.

There is also the potential for fakery in the form of data that’s real: Satellites may accurately display what they see, but what they see has been expressly designed to fool the automated analysis tools.

As an example, Geist pointed to Russia’s intercontinental ballistic missiles. When they are stationary, they’re covered in camo netting, making them hard to pick out in satellite images. When the missiles are on the move, special devices attached to the vehicles that carry them shoot lasers toward detection satellites, blinding them to the movement. At the same time, decoys are deployed — fake missiles dressed up as the real deal, to distract and thwart analysis.

“The focus on using AI outstrips or outpaces the emphasis put on countermeasures,” said Weiner.

Given that both physical and AI-based deception could interfere with analysis, it may one day become hard for officials to trust any information — even the solid stuff. “The data that you’re seeing is perfectly fine. But you assume that your adversary would fake it,” said Weiner. “You then quickly get into the spiral where you can’t trust your own assessment of what you found. And so there’s no way out of that problem.”

From there, it’s distrust all the way down. “The uncertainties about AI compound the uncertainties that are inherent in any crisis decision-making,” said Weiner. Similar situations have arisen in the media, where it can be difficult for readers to tell if a story about a given video — like an airstrike on a hospital in Gaza, for instance — is real or in the right context. Before long, even the real ones leave readers feeling dubious.

More than a century ago, Alfred von Schlieffen, a German war planner, envisioned the battlefield of the future: a person sitting at a desk with telephones splayed across it, ringing in information from afar. This idea of having a godlike overview of conflict — a fused vision of goings-on — predates both computers and AI, according to Geist.

Using computers to synthesize information in real time goes back decades too. In the 1950s, for instance, the U.S. built the Continental Air Defense Command, which relied on massive machines (then known as computers) for awareness and response. But tests showed that a majority of Soviet bombers would have been able to slip through, often because they could fool the defense system with simple decoys. “It was the low-tech stuff that really stymied it,” said Geist. Some military and intelligence officials have concluded that next-level situational awareness will come with just a bit more technological advancement than they previously thought, although this has not historically proven to be the case. “This intuition that people have is like, ‘Oh, we’ll get all the sensors, we’ll buy a big enough computer and then we’ll know everything,’” he said. “This is never going to happen.”

This type of thinking seems to be percolating once again and might show up in attempts to integrate AI in the near future. But Geist’s research, which he details in his forthcoming book “Deterrence Under Uncertainty: Artificial Intelligence and Nuclear Warfare,” shows that the military will “be lucky to maintain the degree of situational awareness we have today” if they incorporate more AI into observation and analysis in the face of AI-enhanced deception.

“One of the key aspects of intelligence is reasoning under uncertainty,” he said. “And a conflict is a particularly pernicious form of uncertainty.” An AI-based analysis, no matter how detailed, will only ever be an approximation — and in uncertain conditions there’s no approach that “is guaranteed to get an accurate enough result to be useful.”

In the movie, with the proclamation that the Soviet missiles are merely simulated, the crisis is temporarily averted. But the wargaming computer, unbeknownst to the authorities, is continuing to play. As it keeps making moves, it displays related information about the conflict on the big screens inside Cheyenne Mountain as if it were real and missiles were headed to the States.

It is only when the machine’s inventor shows up that the authorities begin to think that maybe this could all be fake. “Those blips are not real missiles,” he says. “They’re phantoms.”

To rebut fake data, the inventor points to something indisputably real: The attack on the screens doesn’t make sense. Such a full-scale wipeout would immediately prompt the U.S. to total retaliation — meaning that the Soviet Union would be almost ensuring its own annihilation.

Using his own judgment, the general calls off the U.S.’s retaliation. As he does so, the missiles onscreen hit the 2D continents, colliding with the map in circular flashes. But outside, in the real world, all is quiet. It was all a game. “Jesus H. Christ,” says an airman at one base over the comms system. “We’re still here.”

Similar nonsensical alerts have appeared on real-life screens. Once, in the U.S., alerts of incoming missiles came through due to a faulty computer chip. The system that housed the chip sent erroneous missile alerts on multiple occasions. Authorities had reason to suspect the data was likely false. But in two instances, they began to proceed as if the alerts were real. “Even though everyone seemed to realize that it’s an error, they still followed the procedure without seriously questioning what they were getting,” said Pavel Podvig, senior researcher at the United Nations Institute for Disarmament Research and a researcher at Princeton University.

In Russia, meanwhile, operators did exercise independent thought in a similar scenario, when an erroneous preliminary launch command was sent. “Only one division command post actually went through the procedure and did what they were supposed to do,” he said. “All the rest said, ‘This has got to be an error,’” because a real attack would have been expected to follow a period of rising tension, not to come as a surprise. It goes to show, Podvig said, “people may or may not use their judgment.”

In the near future, Podvig continued, nuclear operators might see an AI-generated assessment saying circumstances are dire. In such a situation, there is a need “to instill a certain kind of common sense,” he said, and make sure that people don’t just take whatever appears on a screen as gospel. “The basic assumptions about scenarios are important too,” he added. “Like, do you assume that the U.S. or Russia can just launch missiles out of the blue?”

People, for now, will likely continue to exercise judgment about attacks and responses — keeping, as the jargon goes, a “human in the loop.”

The idea of asking AI to make decisions about whether a country will launch nuclear missiles isn’t an appealing option, according to Geist, though it does appear in movies a lot. “Humans jealously guard these prerogatives for themselves,” Geist said.

“It doesn’t seem like there’s much demand for a Skynet,” he said, referencing another movie, “Terminator,” where an artificial general superintelligence launches a nuclear strike against humanity.

Podvig, an expert in Russian nuclear goings-on, doesn’t see much desire for autonomous nuclear operations in that country.

“There is a culture of skepticism about all this fancy technological stuff that is sent to the military,” he said. “They like their things kind of simple.”

Geist agreed. While he admitted that Russia is not totally transparent about its nuclear command and control, he doesn’t see much interest in handing the reins to AI.

China, of course, is generally very interested in AI, and specifically in pursuing artificial general intelligence, a type of AI which can learn to perform intellectual tasks as well as or even better than humans can.

William Hannas, lead analyst at the Center for Security and Emerging Technology at Georgetown University, has used open-source scientific literature to trace developments and strategies in China’s AI arena. One big development is the founding of the Beijing Institute for General Artificial Intelligence, backed by the state and directed by former UCLA professor Song-Chun Zhu, who has received millions of dollars of funding from the Pentagon, including after his return to China.

Hannas described how China has shown a national interest in “effecting a merger of human and artificial intelligence metaphorically, in the sense of increasing mutual dependence, and literally through brain-inspired AI algorithms and brain-computer interfaces.”

“A true physical merger of intelligence is when you’re actually lashed up with the computing resources to the point where it does really become indistinguishable,” he said.

That’s relevant to defense discussions because, in China, there’s little separation between regular research and the military. “Technological power is military power,” he said. “The one becomes the other in a very, very short time.” Hannas, though, doesn’t know of any AI applications in China’s nuclear weapons design or delivery. Recently, U.S. President Joe Biden and Chinese President Xi Jinping met and made plans to discuss AI safety and risk, which could lead to an agreement about AI’s use in military and nuclear matters. Also, in August, regulations on generative AI developed by China’s Cyberspace Administration went into effect, making China a first mover in the global race to regulate AI.

It’s likely that the two countries would use AI to help with their vast streams of early-warning data. And just as AI can help with interpretation, countries can also use it to skew that interpretation, to deceive and obfuscate. All three tasks are age-old military tactics — now simply upgraded for a digital, unstable age.

Science fiction convinced us that a Skynet was both a likely option and closer on the horizon than it actually is, said Geist. AI will likely be used in much more banal ways. But the ideas that dominate “WarGames” and “Terminator” have endured for a long time.

“The reason people keep telling this story is it’s a great premise,” said Geist. “But it’s also the case,” he added, “that there’s effectively no one who thinks of this as a great idea.”

It’s probably so resonant because people tend to have a black-and-white understanding of innovation. “There’s a lot of people very convinced that technology is either going to save us or doom us,” said Nina Miller, who formerly worked at the Nuclear Threat Initiative and is currently a doctoral student at the Massachusetts Institute of Technology. The notion of an AI-induced doomsday scenario is alive and well in the popular imagination and also has made its mark in public-facing discussions about the AI industry. In May, dozens of tech CEOs signed an open letter declaring that “mitigating the risk of extinction from AI should be a global priority,” without saying much about what exactly that means.

But even if AI does launch a nuclear weapon someday (or provide false information that leads to an atomic strike), humans still made the decisions that led us there. Humans created the AI systems and made choices about where to use them.

And, besides, in the case of a hypothetical catastrophe, AI didn’t create the environment that led to a nuclear attack. “Surely the underlying political tension is the problem,” said Miller. And that is thanks to humans and their desire for dominance — or their motivation to deceive.

Maybe the humans need to learn what the computer did at the end of “WarGames.” “The only winning move,” it concludes, “is not to play.”