The sudden suspension of a controversial multimillion-dollar surveillance system used by several government agencies in Utah has opened up a debate about the lack of oversight for artificial intelligence systems in law enforcement.

Last week, the Utah Attorney General’s office suspended a $20.7 million contract with Banjo, a technology firm using government surveillance data to develop crime detection software, following revelations of the founder’s past membership in a white supremacist group.

Damien Patton, who serves as CEO of the SoftBank-backed company, was reportedly an active member of the Ku Klux Klan as a teenager, and participated in a 1990 drive-by shooting of a synagogue in suburban Nashville, according to the tech blog OneZero.

In a statement, a spokesperson for Utah Attorney General Sean Reyes said the office would be moving forward an already planned third-party audit of the software to “address issues like data privacy and possible bias.” Reyes recommended that other state agencies do the same.

“The Utah Attorney General’s office is shocked and dismayed at reports that Banjo’s founder had any affiliation with any hate group or groups in his youth,” said the statement. “Neither the AG nor anyone in the AG’s office were aware of these affiliations or actions. They are indefensible.”

According to documents obtained by Coda Story under Utah’s Government Records Access and Management Act, Banjo’s contract with the state gave the company live access to an unprecedented number of government data streams, including 911 calls, traffic and CCTV cameras, and location data for state vehicles.

With real-time access to this vast amount of information, Banjo employs artificial intelligence to alert first responders in dozens of agencies across Utah to crimes and other public safety threats as they happen. Before the suspension of the contract, the company’s technology was already in use by all 29 of the state’s counties, at least 23 cities and even campus police at the University of Utah, Motherboard reported last month.

The Utah Department of Public Safety said in a statement that it is also conducting a review of Banjo’s technology, though it and other agencies could not unilaterally suspend their agreements with the company.

“We have not suspended the current contract because the contract is with the Department of Administrative Services Division of Purchasing. We are discussing and reviewing the matter with them,” a spokesperson wrote.

Banjo announced last Wednesday that it had suspended all of the company’s data collection operations in Utah.

The revelations about Patton’s past ties to the white supremacist movement have prompted new calls for transparency in how artificial intelligence is used by law enforcement. Several prominent Utah lawmakers called for a public audit of Banjo’s contract long before this week’s news. They have now renewed their push for increased oversight of state data sharing with private companies.

“I’ve been one of the only people asking questions about this for years now, and nobody seemed to know what the answers are — for so long I thought I was crazy,” said Democratic Utah Representative Angela Romero, who sits on the state’s Law Enforcement and Criminal Justice Committee. “A lot of really powerful people seem to have a vested interest in keeping the details of this program a secret.”

Until this week’s revelations, Patton’s colorful past was a selling point for the company in an industry noted for its culture of founder-worship. Patton is known for his long beard and eccentric dress sense, and his story starts with his time as a homeless teenage runaway. His roundabout career also includes stints in the U.S. Navy, as a NASCAR pit mechanic and as a crime scene investigator.

This tale has been told in magazines and newspapers, and in pitch meetings and introductions at technology conferences, for nearly a decade.

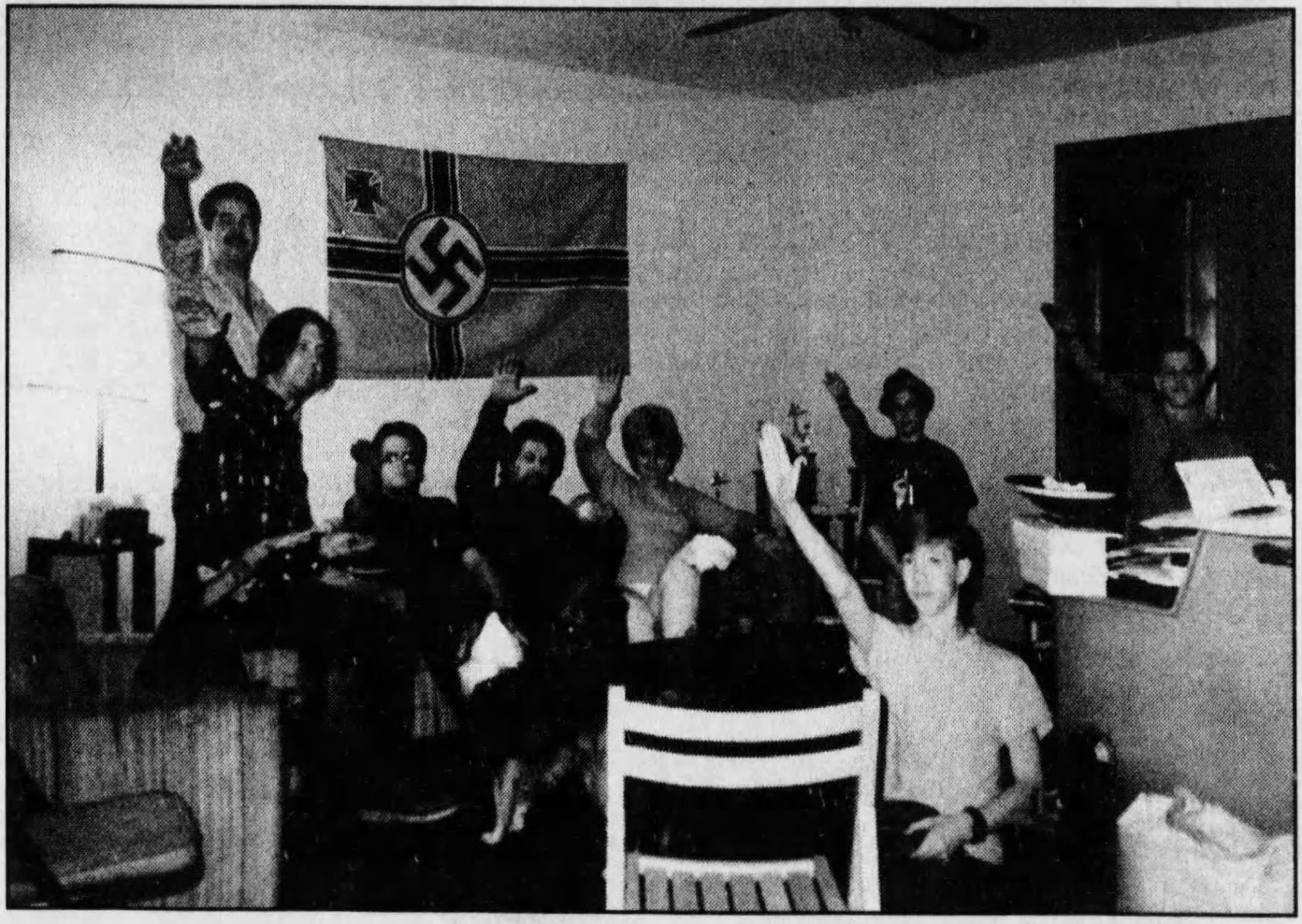

But there is also a darker side to it. OneZero, which reviewed thousands of pages of public court documents, wrote that Patton was also involved with white supremacist groups and, at 17 years old, was party to a synagogue shooting.

According to court records, Patton was driving the vehicle on the day a Klan leader shot out the windows of the West End Synagogue in Nashville, Tennessee. No one was injured in the incident. OneZero reported that two Klansmen were later convicted of crimes connected to the shooting and that Patton pleaded guilty to related acts of juvenile delinquency.

Patton also testified at the trial about his beliefs at the time. “We believe that the blacks and the Jews are taking over America, and it’s our job to take America back for the white race,” he said.

Artificial intelligence and bias

The details of Patton’s past highlight the importance of oversight in the writing of surveillance algorithms to ensure that they are as unbiased as possible, said Michael German, a fellow at the Brennan Center for Justice’s Liberty & National Security Program, in a telephone interview.

“When information like this is brought to light, it also brings reform,” he said. “Unfortunately, law enforcement agencies often hide how their systems work, and the only opportunity for reform is a good deal later, when we have the data to back it up.”

Ties to white nationalist ideology and alt-right groups have marred the reputations of several law enforcement technology firms recently. The founder of Clearview AI, the facial recognition startup used by more than 600 U.S. law enforcement agencies, including Immigration and Customs Enforcement and Customs and Border Protection, was recently found to have links to far-right figures. Among them are Mike Cernovich, who spearheaded the “Pizzagate” conspiracy theory, and Andrew “weev” Auernheimer, webmaster of the neo-Nazi website “The Daily Stormer.” Several Clearview AI employees were also exposed for sharing extremist content and attending events with other white nationalists, including alt-right figurehead Richard Spencer.

Peter Thiel, who sits on the board of Facebook and co-founded the data mining giant Palantir, is also reported to be linked to several alt-right figures, including the former Breitbart writer Milo Yiannopoulos.

While these companies and individuals have written off criticism of their connections to far-right and white nationalist groups as partisan attacks, such exposures point to a need to examine biases in AI systems, German said.

“Any system made by human beings will mirror or even amplify the biases that already exist in both law enforcement and society,” he said.