Back in November 2018, Taiwan was targeted by a massive disinformation campaign. The aim of this effort, widely attributed to Beijing, was to influence midterm election results on the island, which China has claimed as part of its own territory since the Chinese Communist Party came to power in 1949.

Many political analysts now believe that this interference played a role in the shock victory of the pro-China candidate Han Kuo-yu in the mayoral race in Kaohsiung, Taiwan’s second largest city. The result was so unexpected that it drew comparisons to the election of President Donald Trump in the United States. After the election, researchers and Taiwanese officials found evidence that much of the disinformation, distributed via content mills, fake accounts, and bots, originated in China.

In January 2019, the V-Dem Institute, an independent research institute based in Sweden, named Taiwan as one of the democracies most affected by disinformation. These incursions have continued through Taiwan’s January 2020 presidential election and the coronavirus pandemic that followed.

According to Doublethink Lab, a Taiwan-based disinformation research center, China’s strategy has evolved. Earlier campaigns focused on open social networks (including the Reddit-like message board PTT, Facebook, and Twitter), but the focus has since shifted toward Line, a popular Tokyo-based messaging app.

Line is similar in function to WhatsApp or China’s WeChat. In 2019, the company reported 194 million monthly users around the world. Eighteen million of them are in Taiwan, a country of just under 24 million people. It is also the most popular messaging app in Japan and Thailand.

“There is no more important platform in how Taiwanese communicate with each other or interact in a social way online,” Nick Monaco, research director at the California-based Institute for the Future’s Digital Intelligence Lab, told me in a recent telephone conversation.

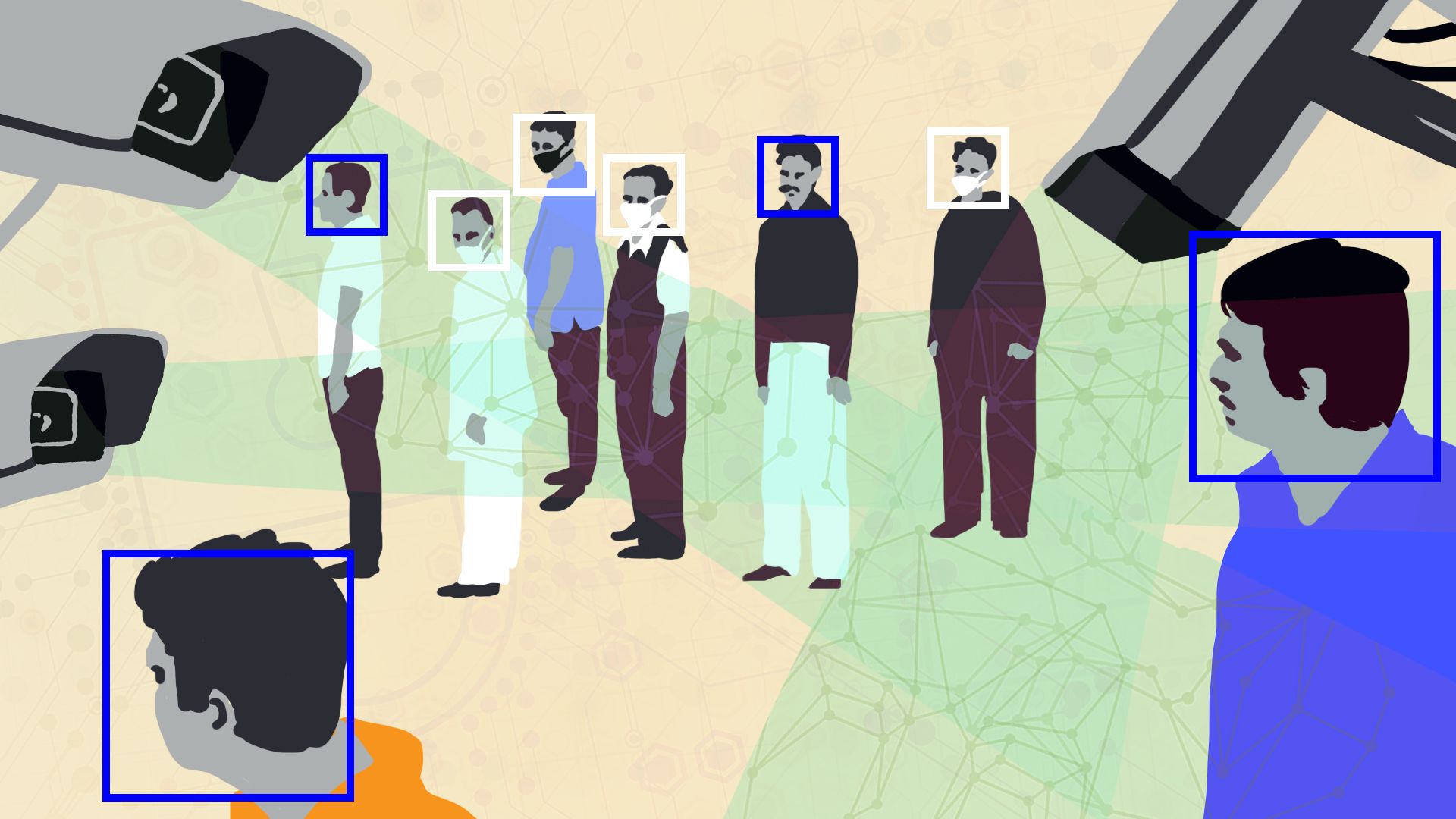

By its very nature as a private messaging app, Line presents a number of challenges for researchers and journalists seeking to understand and combat disinformation in Taiwan.

“Line is relatively closed in comparison to Facebook and PTT,” said Monaco. “You can’t often see where messages came from, how popular they are, and how much they are spreading.”

Last month, an Institute for the Future report identified an obscure Malaysia-based operation named the Qiqi News Network as playing a key role in the creation and spread of Covid-19 disinformation, via Line groups and activity on a number of other platforms. Monaco believes that Qiqi’s use of mainland-specific Chinese phrasing and its frequent promotion of content produced by Chinese state-owned media offer evidence of a connection to Beijing.

Some messages linked to Qiqi and other unidentified sources have also downplayed or cast doubt on foreign reporting of Uyghur human rights abuses in Xinjiang, accused the Taiwanese government of hiding the scale of the coronavirus pandemic, and questioned the academic background of Taiwan’s President Tsai Ing-wen.

Chatbots to counter disinformation

Around the world, independent fact-checking and academic research are viewed as some of the most crucial lines of defense against disinformation.

Johnson Hsiang-Sheng Liang, however, is concerned about the limitations of this approach. He believes that the reach and influence of the media and educational institutions can’t compete with that of hugely popular chat apps like Line.

“Personally I often receive hoaxes from Line groups,” Liang said in a series of online messages. “Finding information to reply to them every time is pretty time consuming.”

Liang is a Taiwan-based hacker with a background in electrical engineering and computer science. In 2017, he and local social worker Billion Lee founded CoFacts, a decentralized, open-source project staffed by volunteers.

Line allows verified users to create chatbots, and CoFacts decided to use this feature to counter fake news and disinformation. It built its own chatbot, to which users can send suspect posts. When the bot receives a message, it checks whether the message matches anything it has received before. If so, the bot sends an automated response saying whether the message can be trusted. If the content has not been seen before, it is passed on to a team of volunteer fact-checkers.
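The match-or-escalate workflow described above can be sketched in a few lines of Python. This is a minimal, hypothetical illustration, not CoFacts’ actual code: the `FactCheckBot` class, the use of `difflib.SequenceMatcher` as a similarity score, and the 0.8 `MATCH_THRESHOLD` are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from difflib import SequenceMatcher
from typing import Optional

# Hypothetical similarity cutoff; CoFacts' real matching method is not described here.
MATCH_THRESHOLD = 0.8


@dataclass
class FactCheckBot:
    # Previously received messages (normalized) mapped to a volunteer-written verdict.
    known: dict[str, str] = field(default_factory=dict)
    # Messages not seen before, waiting for volunteer fact-checkers.
    review_queue: list[str] = field(default_factory=list)

    @staticmethod
    def normalize(text: str) -> str:
        # Lowercase and collapse whitespace so trivial edits don't defeat matching.
        return " ".join(text.lower().split())

    def find_match(self, text: str) -> Optional[str]:
        """Return the verdict of the most similar known message, if similar enough."""
        query = self.normalize(text)
        best_verdict, best_score = None, 0.0
        for known_text, verdict in self.known.items():
            score = SequenceMatcher(None, query, known_text).ratio()
            if score > best_score:
                best_verdict, best_score = verdict, score
        return best_verdict if best_score >= MATCH_THRESHOLD else None

    def handle(self, text: str) -> str:
        # Seen before: reply automatically. New: queue it for human review.
        verdict = self.find_match(text)
        if verdict is not None:
            return f"Previously checked: {verdict}"
        self.review_queue.append(self.normalize(text))
        return "New message: forwarded to volunteer fact-checkers."

    def record_verdict(self, text: str, verdict: str) -> None:
        # Called once volunteers have reviewed a queued message.
        key = self.normalize(text)
        self.known[key] = verdict
        if key in self.review_queue:
            self.review_queue.remove(key)


if __name__ == "__main__":
    bot = FactCheckBot()
    print(bot.handle("Drinking hot water cures the virus"))
    # New message: forwarded to volunteer fact-checkers.
    bot.record_verdict("Drinking hot water cures the virus", "false")
    print(bot.handle("drinking  hot water CURES the virus"))
    # Previously checked: false
```

The design choice worth noting is that only unfamiliar messages reach humans: once volunteers record a verdict, every near-identical copy forwarded later is answered automatically, which is what lets a small volunteer team keep pace with viral hoaxes.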

Line also uses CoFacts’ findings and content on its own official fact-checking account, and the Institute for the Future has drawn on the CoFacts database in its research.

Risks in other markets

While Taiwan’s difficult relationship with China poses unique geopolitical challenges, it is not the only country in East Asia dealing with disinformation spread via Line.

“China obviously has a strategic interest in the greater East and Southeast Asia regions,” said Monaco, adding that the platform “may be used in other places” to disseminate fake news and propaganda.

In Thailand, the media watchdog Sure and Share Center has been monitoring disinformation on the app since 2015. Until recently, most of the misleading stories it encountered were health-related.

“The source of the disinformation often came from people who want to sell something — a herbal medicine or supplement,” said Peerapon Anutarasoat, one of the organization’s fact-checkers.

Even amid the coronavirus pandemic, that pattern is changing. Student-led protests that began in July against the Thai military’s powerful role in government have led to an increase in political disinformation on Line. Recent examples include a widely shared story claiming that the U.S. government was funding activists and demonstrations.

“Political misinformation is seasonal,” said Anutarasoat. “Now, we have protests in Thailand, so there is a lot of information and misinformation that makes people confused.”

Anutarasoat fears that closed channels such as Line help to create hard-to-penetrate echo chambers in which disinformation thrives. He also believes that Line is taking fewer steps to address these issues in Thailand than it has in Taiwan.

“Thailand is Line’s number-two market, but we cannot find many efforts around disinformation,” he said.

In Japan, Line’s largest market with 80 million active users, the app is not seen as a major vector for disinformation. This is largely attributable to the country’s media consumption habits: according to a 2020 Reuters Institute study, print newspapers still dominate there, and online sharing of news lags far behind that in other Asian countries.

“Misinformation there isn’t a big problem,” said Masato Kajimoto, a professor of media studies at the University of Hong Kong, in a telephone conversation. “If you look at research, people in Japan tend to be less willing to share political news with certain viewpoints.”

He will be watching closely, though, since sudden domestic unrest or a shift in relations with China could significantly alter the country’s information landscape.

For now, observers believe that Taiwan has responded well to the challenges posed by external disinformation since the 2018 campaign. CoFacts’ Line bot and the work of organizations such as Doublethink Lab have both played a part in minimizing the risk posed by fake news and conspiracy theories.

Kajimoto sees this as largely the result of the widespread sharing of knowledge about disinformation and how it proliferates.

When it comes to fake news, he believes that most Taiwanese people are “aware of potential threats from mainland China.” He attributes this in part to the growing visibility of organizations dedicated to providing reliable information to an increasing number of individuals and institutions.

“Even if the proportion of people who use fact-checking platforms like CoFacts is not high, everyone knows they exist now,” he said.