Diogo Pacheco is a bot-hunter. For the uninitiated, bots are the ground troops of social media: autonomous accounts that post, tweet, or retweet. Some bots are harmless, sharing pictures of cats or jokes. Others, however, look like actual users and can engage in political or disinformation campaigns. Working with the University of Exeter and with the Observatory on Social Media and the Center for Complex Networks and Systems Research at Indiana University, Pacheco studies inauthentic activity online and examines how fake conversations and coordinated online networks can change our opinions and the way we think. Bots and fake accounts, which have invaded online conversations on everything from the coronavirus vaccines to the human rights crisis in Xinjiang, have been at the center of the debate about disinformation since 2016, the year President Trump was elected and the UK held its Brexit referendum.

I spoke to Pacheco about how he tracks bots and fake social media behavior. This interview has been edited for length and clarity.

Coda: How would you describe your job to someone who’s not in the bot world?

Diogo Pacheco: I try to be like a — not a spy, but an investigator. How can I try to exploit and unveil bad actors? I’m trying to do reverse engineering. I start with the assumption that there are bad guys out there, and I’m just trying to find them.

Coda: Why should people worry or care about bad actors, bots and automated information campaigns?

DP: So the problem is not necessarily having bots. There are bots that are benign, they do good stuff, they explain things for people and make information readily available. The problem is when you have someone misleading you, someone trying to impersonate someone else, or trying to amplify some narrative or some discourse. Everything that you interact with, everything you read and you perceive affects your opinion.

Coda: How easy is it to actually figure out what’s going on and whether an account you are looking at is fake?

DP: Bots are evolving, and bot detectors are also evolving. So every time it’s more difficult to detect because the bots get more elaborate. But the original intention is the same. There are people that are trying to exploit the system, trying to amplify their agenda.

Coda: Has disinformation from bots intensified during the pandemic?

DP: Every day we see more and more people distrusting very well known scientific facts. Like, people not believing anymore in the efficacy of vaccines, people believing the earth is flat. It’s unimaginable — how are we going back instead of moving forwards? I think this is in part due to these types of accounts. We have our own bias, our psychological bias. If these accounts manage to get you into their network, it’s hard not to conform with the group.

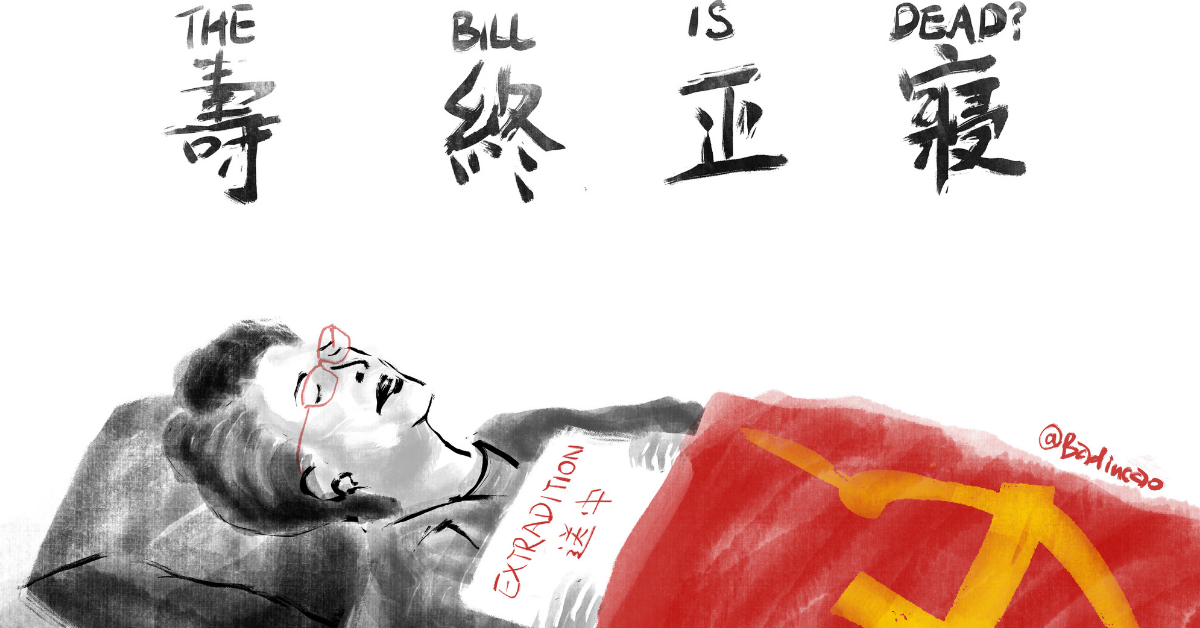

Coda: Tell me about what your research revealed about the Hong Kong protests in 2019.

DP: In that case, we knew there was this huge warfare going on on social media. One side was trying to ban and shut down the internet, the other side was trying to organize online. The pro-Hong Kong protest messages were mostly in English, trying to draw international attention towards the problem. On the Chinese side, they were trying to target local people, and there was a clear message to stay home and to keep people away from the streets. On both sides, there was a high bot score on the “Botometer.”

Coda: People who work in areas of disinformation and authoritarian technology are now on the lookout for inauthentic behavior online. What will the next generation of bots look like?

DP: Every time, it’s more difficult to detect bots because they become more complex and elaborate, and you have cyborg accounts that are operated by a normal human, and then become automated. The problems arise when you start seeing coordinated action, where there are accounts that are both very human-like and very bot-like. But the idea is the same: there will still be people that are trying to exploit the system, trying to evade or amplify the agenda.