On a breezy but still warm day recently, around 200 international guests gathered inside Vienna’s Museum of Applied Arts, an imposing neo-Renaissance building. The agenda was no less grand than the surroundings: to defend Europe’s citizens from hostile forces outside its borders while turning the region into a global leader in setting standards for tech regulation.

Organized by Austria’s Erste Foundation, which encourages citizens to become active members of society, the conference — “How the EU can take the lead in governing technology globally” — addressed the growing need for the regulation of large technology companies, such as Facebook or Google.

“Trust is lost. Stakes are very high. We need to pull this all into public accountability,” said the keynote speaker, Marietje Schaake, a former member of the European Parliament from the Netherlands. Schaake was speaking to the media on the sidelines of the conference.

Silicon Valley’s giants stand accused of a plethora of far-reaching systemic flaws ranging from discriminatory race and gender practices in algorithmic decision making to enabling the use of micro-targeting to spread political propaganda. In the EU, the big tech platforms have come under increasing scrutiny with the bloc’s ground-breaking data protection regulation (GDPR) coming into effect last year.

Europe is already home to the world’s strictest data privacy laws, and Schaake is one of the most prominent voices arguing the EU can do much more. Having served as a Member of the European Parliament for a decade, the Dutch politician was behind some of Europe’s key digital initiatives, such as the Digital Agenda for Europe, which was originally conceived in 2010 to speed up the roll-out of high-speed internet in Europe. In 2017, she was singled out by Politico as one of the 28 most influential Europeans. After she resigned at the end of her parliamentary term this year, she was appointed the international policy director of the Cyber Policy Center at Stanford University.

Schaake contends that since companies like Facebook, Twitter or Google have effectively become the guardians of how information flows, people around the world have lost trust in information sources of any kind. She blames the advertising model whereby “paying instead of convincing” determines one’s position in the marketplace of ideas.

“Governing for profit optimization allows blatant conspiracy to rise above news stories in search results,” Schaake said in Vienna. “Companies like Facebook allow their clients to advertise against categories such as ‘Jew Hater’, or ‘Hitler did nothing wrong’.”

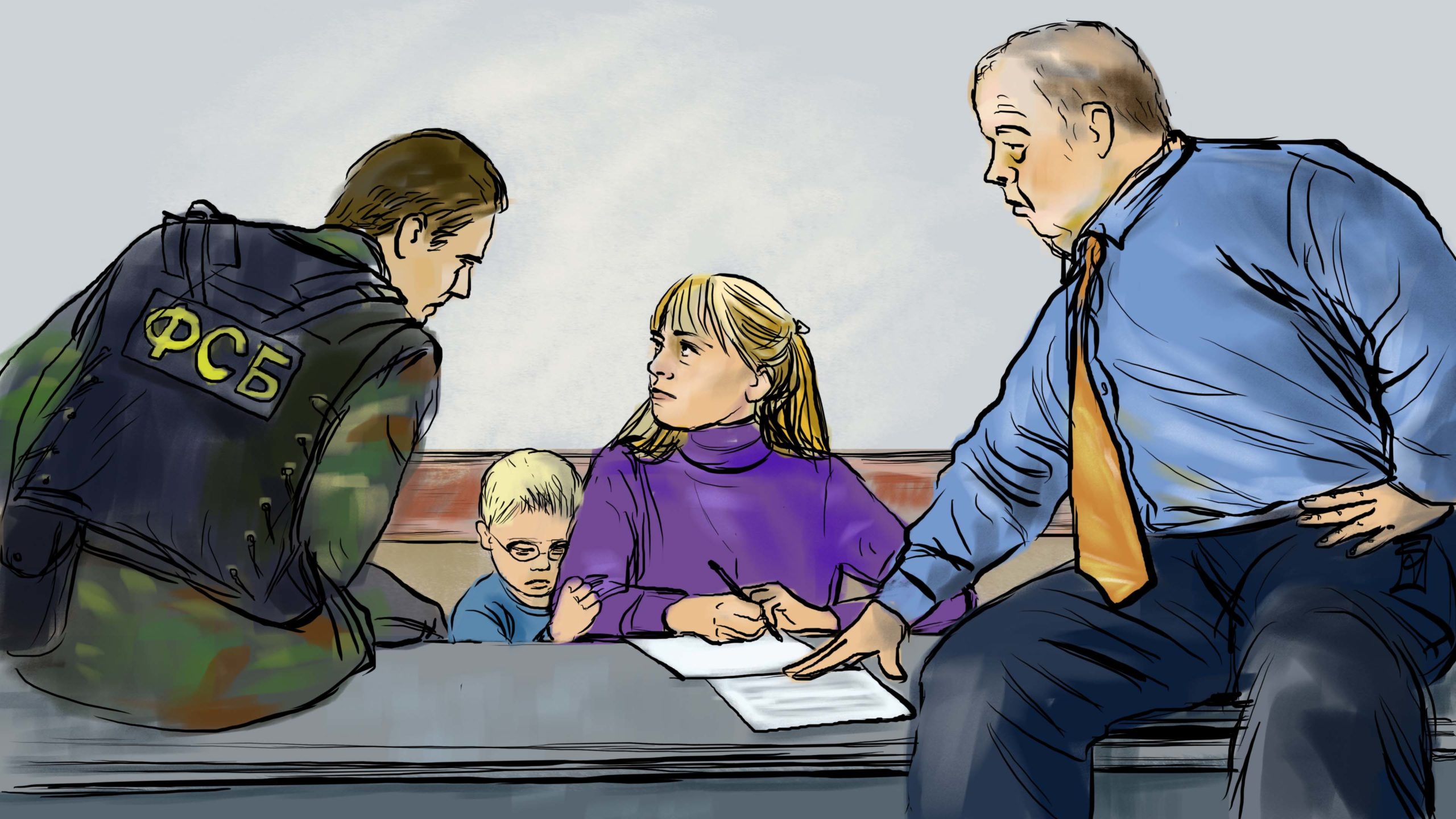

In an effort to counter micro-targeted disinformation campaigns, the European Commission proposed a new Digital Services Act earlier this year. These campaigns are mostly connected to Russian online influence operations in Europe. For example, the UK-based impact agency 89up reported that Russia spent up to $5.18 million on attempts to influence British voters to vote Leave in the UK’s 2016 EU membership referendum.

The act is due to be presented at the end of 2020 and is widely expected to contain updated transparency rules on political advertising which would require big tech platforms to subject their algorithms to more stringent forms of regulation.

More recently, the European Commission’s incoming president, Ursula von der Leyen, pledged to create “a Europe fit for the digital age” by enhancing the Digital Services Act with proposals including ethical regulations for AI and new taxes for tech companies.

Algorithms and accountability

Many experts in Europe today agree on two areas of regulation that could help alleviate the problem of manipulation on social media and micro-targeting for political goals: content moderation and holding social media algorithms accountable.

The first would make social media companies liable for the content on their platforms. The second, algorithmic accountability, would force big tech companies to reveal the formulas they use to collect data and match it with advertisers.

“The debate about intermediary liability is really at the heart of this question. Should companies like YouTube or Twitter be liable for what happens on their platforms?” Schaake asked journalists at the conference.

Katarzyna Szymielewicz from the Polish NGO Fundacja Panoptykon, which has been engaged in legal battles pushing for the implementation of the right to explanation of automated decisions in Poland, sees a clear link between liability and algorithmic accountability.

“Liability doesn’t concern only content. It’s more about the way tech platforms moderate the discussion. The users might create the content, but the companies create the algorithm. They should have certain transparency obligations,” she said.

A host of advocacy groups in Europe, and increasingly in the United States, are calling for algorithmic accountability. They demand legislation that would make the inner workings of the algorithms used by companies like Facebook or Google transparent.

“It’s not like we want them to reveal all their codes as part of the solution,” said Szymielewicz. “They use hundreds of these algorithms on a daily basis; that would be impossible… We just want them to share with the public the decisions behind building these algorithms, how do they optimize them.”

Schaake says that she has observed a change in the way companies like Facebook engage with the legislators.

She says that just three years ago, Facebook still denied that the information flows on the platform could have any impact on countries’ electoral processes, let alone accepted any responsibility for the content shared by its users.

“I remember sitting on a panel on intermediary liability back in 2016. I asked Facebook representatives if they thought their platform could somehow influence the outcome of the upcoming American elections. They told me: ‘don’t watch too many movies.’”

Since then, she says, and especially after the 2018 Cambridge Analytica scandal, Facebook has become more aware of how the platform can manipulate users.

“They [Facebook] now say they have a lot to learn. They say these things are very complicated, but that they are working on it,” Schaake added. “I think that they are really stressed out about all the pressure they are currently under, so they want to create an impression that they want to cooperate on this, but that’s very far from reality.”

As the global debate about how digital technologies pose serious challenges to the integrity of democratic institutions gathers momentum, new hurdles have emerged. Facebook’s recent announcement that the company will not fact-check political ads has led to calls for the voluntary suspension of online political advertising before next month’s UK election. The problems with digital campaigning have also cast a shadow on the 2020 U.S. presidential election.

The issue was given more prominence last week when the actor and comedian Sacha Baron Cohen gave a speech attacking Facebook and other social media platforms for enabling the spread of hate speech and misinformation. Speaking about Facebook’s decision not to fact-check political ads, Baron Cohen said that under its own rules, the company would have allowed Adolf Hitler to run propaganda on its platform.

Facebook’s Asia-Pacific Policy Communications Director Clare Wareing said that the company has been working with experts and legislators across Europe on four areas where the company thinks regulation could help.

“We are committed to working with experts and policymakers to shape the rules of the internet and have outlined four areas where we think regulation could help — harmful content, election integrity, privacy and data portability,” she said in an email.

Since Facebook, like other big tech companies, considers its algorithms a trade secret, algorithmic accountability is not among the four areas cited.

However, there have been initiatives on both sides of the Atlantic to release the code from the so-called “black boxes”. Earlier this year, Yvette Clarke, a Democratic member of the U.S. House of Representatives, introduced the Algorithmic Accountability Act, which would require large tech companies to audit their algorithms for potential abuses of human rights.

Some version of the right to explanation is already enshrined in the EU’s GDPR, which mandates that users be able to ask for the data behind the algorithmic decisions made about them, including those made by advertising programs and social networks.

Thomas Lohninger, the director of Vienna-based digital rights NGO Epicenter.works and a former policy advisor for European Digital Rights, says that the EU should be much more confident in going beyond the existing regulation.

“Sadly, our politicians often feel like they are powerless when it comes to Google or Facebook,” he said. “But we have seen that the past two legislative terms in the EU have woken up to these issues.”

After the wave of misinformation campaigns during the last EU elections, there is an appetite in Brussels to go beyond the existing regulation. The European Commission is currently carrying out an in-depth analysis of algorithmic transparency. The Commission will engage with experts, academics, industry, civil society and policy-makers throughout the project.

“Two studies are currently under evaluation, focusing in particular on governance consideration, oversight and algorithmic auditing technologies,” the EU’s Digital Single Market spokesperson Inga Höglund said in an email. The results of the study are expected to be used in policy-making decisions about algorithms.

“Preliminary work on the project has already informed several policy steps taken by the Commission, such as regulation on ‘platform-to-business’ trading practices, which establishes an obligation on online platforms and search engines to be transparent about the general parameters which determine online ranking,” she added.

Implementation requires architecture

Joanna Goodey, who heads the Freedom and Justice department at the EU’s Agency for Fundamental Rights, says that, whenever the forthcoming Digital Services Act is eventually enacted, the key thing will be its implementation.

“You can have the best law drafted in the world, but the reality is the implementation,” she said during a panel discussion at the conference. “Do we have an architecture strong enough in the EU? One can argue that the data protection authorities have been grossly under-resourced to deal with the GDPR.”

Schaake says that so far it has been the tech companies who have shown more initiative in setting rules and standards.

“As soon as anti-trust investigations were announced in Europe and the U.S., we’ve seen tech companies hiring more lobbyists for their campaigns,” she said. “They are also funding a lot of civil society organizations, as well as many academic programs, that are working on tech policy.”

She continued: “The question is then not whether we will see rules and regulation, but who is in charge of it and based on which principles and desired outcome.”