Between autonomous police dog robots, facial recognition cameras that let you pay for groceries with your smile and bots that can write Wordsworthian sonnets in the style of Taylor Swift, it is beginning to feel like AI can do just about anything. This week, a new capability has been added to the list: A group of researchers in Switzerland say they’ve developed an AI model that can tell if you’re gay or straight.

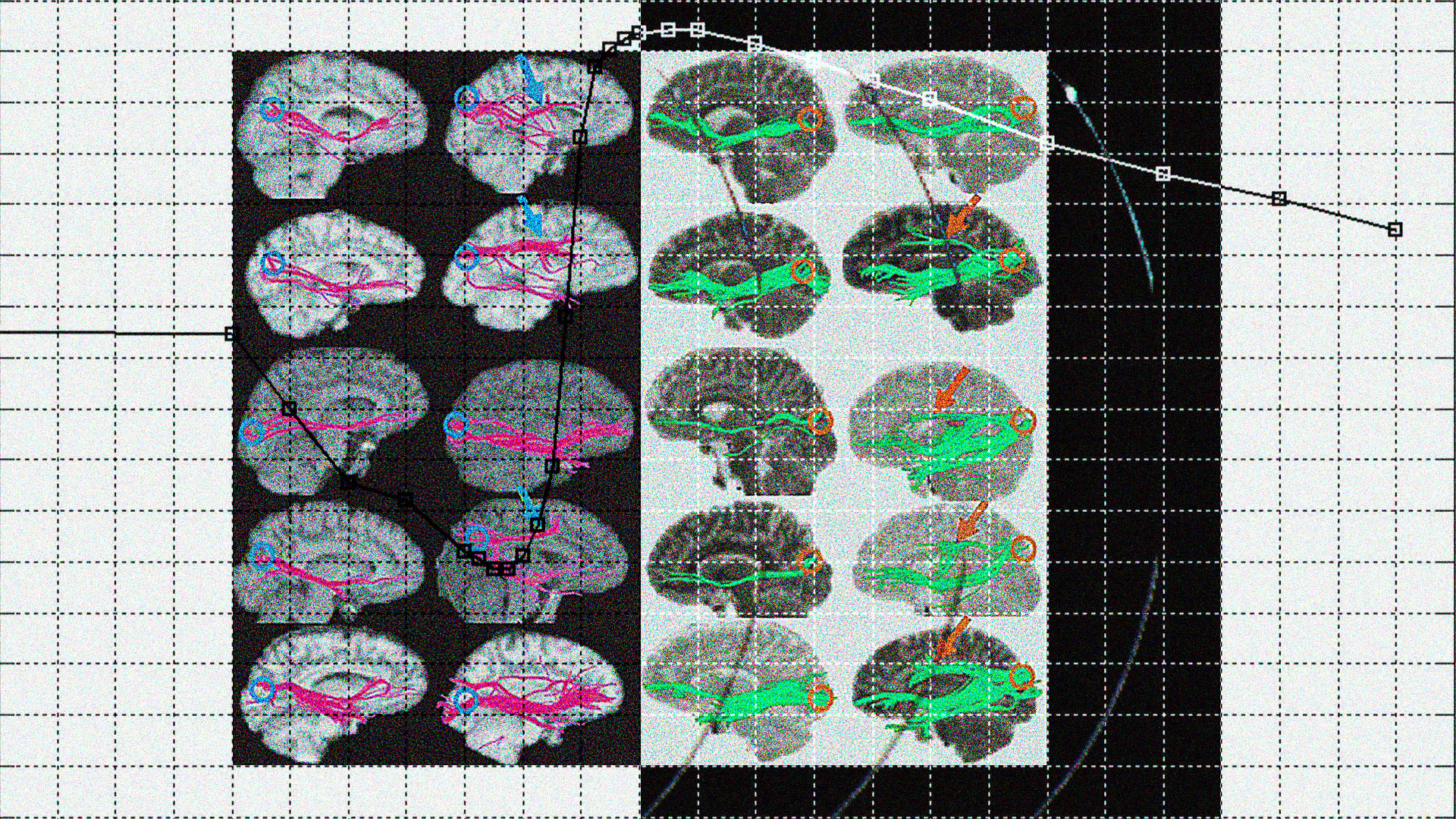

The group has built a deep learning AI model that they say, in their peer-reviewed paper, can detect the sexual orientation of cisgender men. The researchers report that by studying subjects’ electrical brain activity, the model is able to differentiate between homosexual and heterosexual men with an accuracy rate of 83%.

“This study shows that electrophysiological trait markers of male sexual orientation can be identified using deep learning,” the researchers write, adding that their findings had “the potential to open new avenues for research in the field.”

The authors contend that it “still is of high scientific interest whether there exist biological patterns that differ between persons with different sexual orientations” and that it is “paramount to also search for possible functional differences” between heterosexual and homosexual people.

Is that so? When the study was posted on Twitter, it drew a strong reaction from researchers and scientists studying AI. Experts on technology and LGBTQ+ rights fundamentally disagreed with the prospect of measuring sexual orientation by studying brain patterns.

“There is no such thing as brain correlates of homosexuality. This is unscientific,” tweeted Abeba Birhane, a senior fellow in trustworthy AI at Mozilla. “Let people identify their own sexuality.”

“Hard to think of a grosser or more irresponsible application of AI than binary-based ‘who’s the gay?’ machines,” tweeted Rae Walker, who directs the PhD in nursing program at the University of Massachusetts in Amherst and specializes in the use of tech and AI in medicine.

Sasha Costanza-Chock, a tech design theorist and associate professor at Northeastern University, criticized the fact that in order for the model to work, it had to leave bisexual participants out of the experiment.

“They excluded the bisexuals because they would break their reductive little binary classification model,” Costanza-Chock tweeted.

Sebastian Olbrich, Chief of the Centre for Depression, Anxiety Disorders and Psychotherapy of the University Hospital of Psychiatry Zurich and one of the study’s authors, explained in an email that “scientific research often necessitates limiting complexity in order to establish baselines. We do not claim to have represented all aspects of sexual orientation.” Olbrich said any future study should extend the scope of participants.

“Bisexual and asexual individuals exist but are ‘simplified away’ by the Swiss study in order to make their experimental setup workable,” said Qinlan Shen, a research scientist at software company Oracle Labs’ machine learning research group who was among those criticizing the study. “Who or what is this technology being developed for?” they asked.

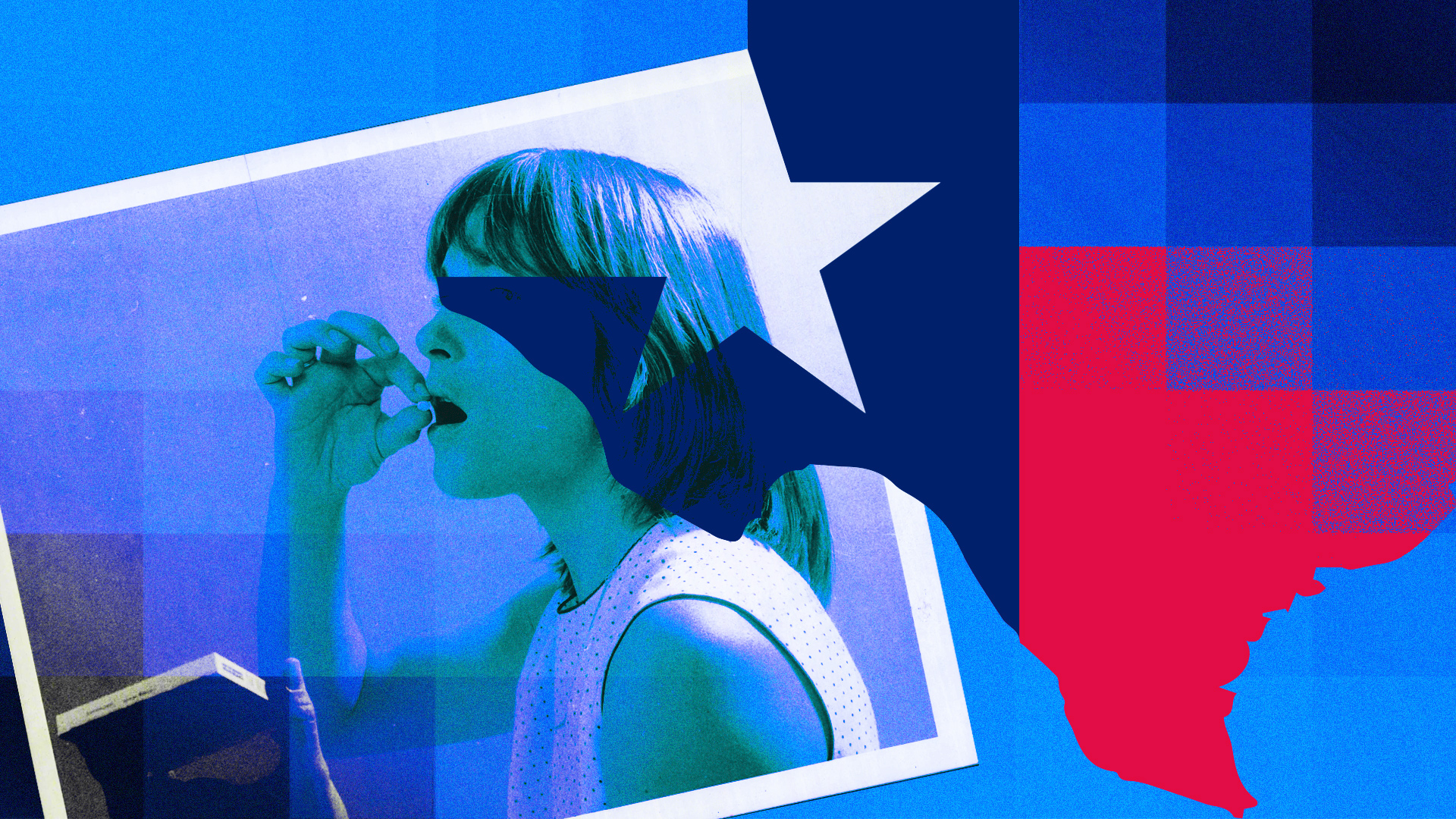

Shen explained that technology claiming to “measure” sexual orientation is often met with suspicion and pushback from people in the LGBTQ+ community who work on machine learning. This type of technology, they said, “can and will be used as a tool of surveillance and repression in places of the world where LGBT+ expression is punished.”

Shen also disagrees with the idea of trying to find a fully biological basis for sexuality. “I think in general, the prevailing view of sexuality is that it’s an expression of a variety of biological, environmental and social factors, and it’s deeply uncomfortable and unscientific to point to one thing as a cause or indicator,” they said.

This isn’t the first time a machine learning paper has been criticized for trying to detect signs of homosexuality. In 2018, researchers at Stanford tried to use AI to classify people as gay or straight, based on photos taken from a dating website. The researchers claimed their algorithm was able to detect sexual orientation with up to 91% accuracy — a much higher rate than humans were able to achieve. The findings led to an outcry and widespread fears of how the tool could be used to target or discriminate against LGBTQ+ people. Michal Kosinski, the lead author of the Stanford study, later told Quartz that part of the objective was to show how easy it was for even the “lamest” facial recognition algorithm to be trained into also recognizing sexual orientation and potentially used to violate people’s privacy.

Mathias Wasik, the director of programs at All Out, has been campaigning for years against gender and sexuality recognition technology. All Out’s campaigners say that this kind of technology is built on the mistaken idea that gender or sexual orientation can be identified by a machine. The fear is that it can easily fuel discrimination.

“AI is fundamentally flawed when it comes to recognizing and categorizing human beings in all their diversity. We see time and again how deep learning applications reinforce outdated stereotypes about gender and sexual orientation because they’re basically a reflection of the real world with all its bias,” Wasik told me. “Where it gets dangerous is when these systems are used by governments or corporations to put people into boxes and subject them to discrimination or persecution.”

The Swiss study was published in June, less than a month after Uganda’s president signed a new, repressive anti-LGBTQ+ law — one of the harshest in the world — that includes the death penalty for “aggravated homosexuality.” In Poland, activists are busy challenging the country’s “LGBTQ-free zones” — regions that have declared themselves hostile to LGBTQ+ rights. And the U.S. Supreme Court just issued a ruling that effectively legalizes certain kinds of discrimination against LGBTQ+ people. Identity-based threats against LGBTQ+ people around the world are clear and present. What’s less clear is whether AI should have any role in mitigating them.

The study’s researchers say that their work could help combat political movements advocating for conversion therapy by showing that sexual orientation is a biological marker.

“Our research is absolutely not intended for use in prosecution or repression — nor would it seem to be a practicable method for such abuse,” said Olbrich. “There is no proof that this method could work in an involuntary setting. It is a sad reality that many technologies can be misused; the ethical responsibility is to prevent misuse, not halt the progress of scientific study.”

He added that the study’s objective was to identify the neurological correlates — not causes — of sexual orientation, in the hope of gaining a more nuanced understanding of human diversity.

“Our work should be seen as a contribution to the larger quest to comprehend the remarkable workings of our neurons, reflecting our behaviors and consciousness. We didn’t set out to judge sexual orientation, but rather to appreciate its diversity. We regret if people felt uncomfortable with the findings,” he said.

“However true these good intentions might be,” said Shen, “I don’t think it erases the inherent potential harms of sexual orientation identification technologies.”

On Twitter, Rae Walker, the UMass nursing professor, was more blunt.

“Burn it to the ground,” they said.